If you’re a CIO, encryption probably feels like one of those things that’s already “handled.” It works, it’s compliant, and it’s rarely the thing breaking your systems.

Quantum-resistant encryption, also known as post-quantum cryptography (PQC), is about making sure the encryption you rely on today doesn’t quietly become useless tomorrow. In simple terms, it’s preparing your security stack for a future where quantum computers can break today’s cryptography.

This is not the time to panic. It’s time to start planning. Your data lives longer than your technology cycles. Customer records, IP, contracts, and regulated information don’t disappear in a year or two. If that data is exposed later, the impact still sits with you.

The uncomfortable part is that most enterprises don’t actually know where cryptography sits across their stack. So “upgrading encryption” isn’t a neat project you can hand off. It’s a roadmap problem. And that’s exactly why CIOs are being pulled into this conversation now.

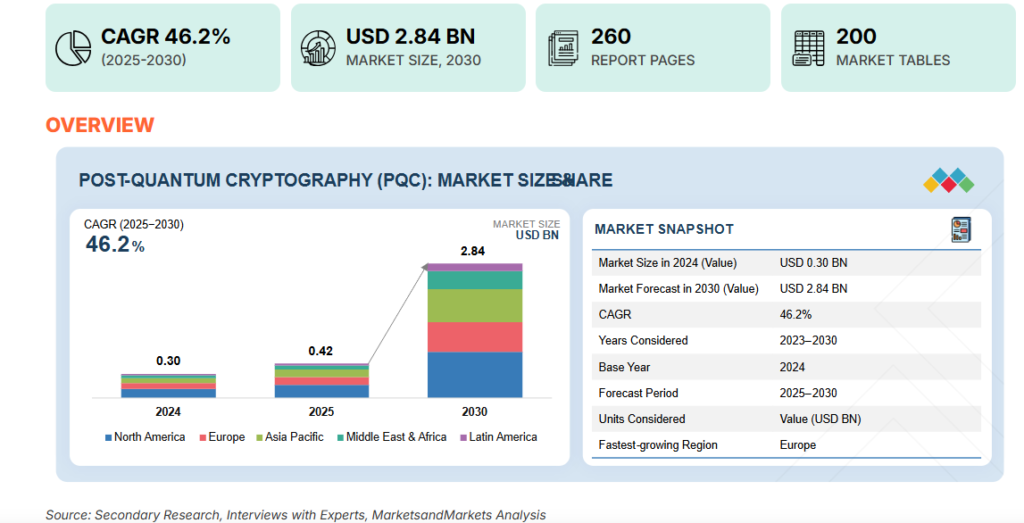

The global post-quantum cryptography (PQC) market is projected to grow from about USD 0.42 billion in 2025 to USD 2.84 billion by 2030, a CAGR of roughly 46.2%, as organisations accelerate quantum-safe initiatives.


What CIOs Should Know About Quantum-Resistant Encryption

This is the short version of what’s going on, without the theory or the hype.

What quantum-resistant encryption means in practice

Quantum-resistant encryption, or post-quantum cryptography, is about protecting the data you’re encrypting today from the computers that will exist tomorrow. If your organisation stores information that needs to stay private for years, the way you encrypt it now will eventually matter.

Why today’s encryption has a shelf life

The cryptography most enterprises rely on was built for current threat models. It works well against today’s attackers. But encryption standards don’t stay secure forever. Over time, what’s considered “secure enough” changes. Quantum computing is one of the reasons that change is now part of long-term planning, not just academic discussion.

Why this becomes a planning issue now

Data doesn’t disappear when systems get replaced. Customer records, contracts, IP, and regulated data often stay in storage long after the platforms that created them are retired. If that data is collected today and exposed later, the impact still sits with the organisation. That’s why encryption strategy quietly becomes a long-horizon risk decision.

What CIOs can realistically start with

No one upgrades cryptography across an enterprise in one move. The practical starting point is visibility. Knowing where encryption exists across applications, vendors, and platforms makes it possible to prioritise change instead of reacting to it later.

How to think about readiness over time

Post-quantum readiness shows up as a gradual shift in how security architecture evolves. It’s something you design for over multiple cycles, alongside modernisation efforts, rather than a one-off security project you “finish” and move on from.

What Is Post-Quantum Cryptography and Why Does It Matter for Enterprises?

Let’s cut through the terminology first, because this topic gets abstract very quickly. For most CIOs, post-quantum cryptography isn’t really about algorithms. It’s about whether the data you’re responsible for today will still be protected years from now.

What post-quantum cryptography looks like in the real world

Post-quantum cryptography, or PQC, is simply a way of designing encryption so it doesn’t fall apart as computing power evolves. In practice, that shows up as changes to how applications, platforms, and vendors handle encryption under the hood. You’re not “adding quantum” to your stack. You’re making sure your security foundations don’t age badly.

Why nobody is replacing encryption overnight

If you’ve ever tried to change something foundational across your IT estate, you already know how this goes. Encryption sits everywhere. In apps you own. In platforms you don’t. In integrations no one remembers building. So this is not a switch you flip. It’s a set of changes that happen gradually, usually alongside other modernisation work.

Why long-life data is where the risk quietly sits

Some data sticks around for a long time. Customer records. Contracts. Financial histories. IP that still matters years later. That’s where quantum-resistant encryption actually becomes relevant. Not because something breaks tomorrow, but because those records are still sensitive long after today’s systems are retired.

Which kinds of data tend to carry the most exposure

If you map this out, the riskiest data is not mysterious. It’s actually the stuff you already worry about. Personally identifiable information. Regulated records. Financial data. Core intellectual property. These are the assets that quietly build long-term risk if the way they’re protected can’t evolve.

Why this ends up on the CIO’s desk

This problem doesn’t belong to any one team. It cuts across architecture, vendors, legacy systems, and long-term platform strategy. That’s why it usually lands with the CIO. It’s about how the organisation changes its security foundations over time, not just what tool gets deployed next.

So if this plays out over years, why does it belong on your agenda now?

Why the Quantum Threat Timeline Is Already a CIO Problem

This is where the timeline often gets misunderstood. Most conversations frame quantum risk as something to worry about “someday.” In reality, the exposure starts building much earlier, and it builds quietly.

What “harvest-now, decrypt-later” actually looks like

Attackers don’t need quantum computers today to create future risk. They can collect encrypted data now and simply hold on to it. The assumption is that decryption becomes feasible later, once quantum computers can break today’s cryptography. From a CIO’s point of view, this means data you protect today can still become tomorrow’s liability.

Why future exposure starts with present-day data

If your organisation stores customer records, contracts, IP, or regulated information, that data doesn’t suddenly lose value when systems get upgraded. It stays sensitive for years. The longer its lifespan, the bigger the window of exposure becomes. That’s where the mismatch starts to matter. Your data lifecycle is long. Cryptographic lifecycles are much shorter.

Where compliance and regulatory risk show up first

Long before quantum computing becomes common, regulators will start asking tough questions about how long-lived data is protected. Expectations about how durable security controls need to be will shift, especially in fields that deal with sensitive or regulated data. That’s usually when this stops being a theoretical security conversation and starts becoming a governance issue.

Why “we’ll deal with it later” gets expensive

Deferring this kind of change doesn’t make it smaller. It makes it harder. Cryptography is embedded in your own systems and in the platforms your vendors supply. The longer it goes unexamined, the more brittle it becomes. When change is forced on you later, it usually shows up as rushed migrations, unplanned disruption, and higher costs.

So if the risk is structural and builds over time, what actually breaks first?

Why Most Enterprises Are Not Ready for Post-Quantum Cryptography

Most enterprises agree, at least in principle, that post-quantum cryptography makes sense. The gap shows up when they try to imagine what changing cryptography across their environment would actually look like. That’s when things start to feel complicated very quickly.

The invisible sprawl of cryptography across the estate

Encryption is everywhere, but it rarely shows up on anyone’s map. It lives inside applications, vendor platforms, libraries, APIs, and old integrations that no one actively tracks anymore. Some of it sits in systems that haven’t been touched in years. Some of it is buried inside third-party software where teams don’t control the implementation details.

Because this sprawl is invisible, most organisations don’t have a clear cryptographic inventory. And without that visibility, it’s hard to even estimate what “upgrading cryptography” would involve. The risk isn’t just missing a few systems. It’s not knowing how many dependencies will surface once change begins.

The operational weight behind cryptographic change

Changing cryptography sounds like a security task. In reality, it pulls in architecture, operations, vendors, testing pipelines, and support teams. Encryption choices are tied to authentication flows, key management, compliance controls, and vendor contracts. Touch one piece, and several others move with it.

This is where technical debt shows up. Older systems don’t adapt easily to new cryptographic standards. Teams end up working around limitations instead of fixing them cleanly. Over time, this turns post-quantum readiness into a broader modernisation challenge.

So where does a CIO even begin?

How CIOs Can Build a Practical Roadmap for Post-Quantum Readiness

This is the part most CIOs care about. Not the theory. The “what do I actually do without breaking everything” part.

Post-quantum readiness isn’t defined by picking algorithms in isolation. In practice, it’s about getting your organisation into a position where cryptography can change without becoming a fire drill every time standards evolve. That’s the real goal of a roadmap. Flexibility first. Migration later.

Start with visibility, not technology

Before choosing tools or standards, most teams need a basic map of where cryptography lives today. Which applications use encryption. Which vendors manage keys. Which systems rely on older libraries. This kind of cryptographic inventory sounds dull, but it changes the conversation. Once you can see the surface area, planning stops being guesswork.
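A first pass at that map can be very simple. The sketch below, written under stated assumptions, scans text such as configuration files for mentions of public-key algorithms that are generally considered vulnerable to large quantum computers. The watch-list, the function names, and the sample file names are all illustrative, and a plain text match will miss cryptography buried in binaries, certificates, or vendor platforms.

```python
import re
from collections import Counter
from typing import Dict

# Illustrative watch-list of public-key algorithms; adjust to your own policy.
QUANTUM_VULNERABLE = ("RSA", "ECDSA", "ECDH", "DSA", "DH")

_PATTERN = re.compile(
    r"\b(" + "|".join(QUANTUM_VULNERABLE) + r")\b", re.IGNORECASE
)


def scan_text(text: str) -> Counter:
    """Count whole-word mentions of watch-listed algorithm names."""
    return Counter(match.upper() for match in _PATTERN.findall(text))


def build_inventory(sources: Dict[str, str]) -> Dict[str, Counter]:
    """Map each source name to the algorithms found in it, skipping clean ones."""
    inventory = {}
    for name, text in sources.items():
        hits = scan_text(text)
        if hits:
            inventory[name] = hits
    return inventory


# Hypothetical sample inputs standing in for real files.
sample = {
    "billing-service/config.yaml": "tls_cert_key_type: RSA\nsigning: ecdsa",
    "reports/notes.txt": "No security settings here.",
}
inventory = build_inventory(sample)
```

Even a rough list like this gives teams something concrete to review with application owners and vendors.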

Use risk to decide where to move first

Not all data carries the same long-term risk. Some records can age out safely. Others can’t. Applying simple risk tiering helps prioritise where post-quantum changes matter most. Long-life data, regulated information, and core IP tend to surface first. This keeps the roadmap focused instead of overwhelming.
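As a rough illustration of simple risk tiering, the sketch below scores each data asset on the three factors named above: how long it must stay confidential, whether it is regulated, and whether it is core IP. The `DataAsset` fields, the ten-year threshold, and the tier labels are assumptions chosen for the example, not a recommended standard.

```python
from dataclasses import dataclass


@dataclass
class DataAsset:
    name: str
    retention_years: int  # how long the data must stay confidential
    regulated: bool
    core_ip: bool


def risk_tier(asset: DataAsset, long_life_years: int = 10) -> str:
    """Return an illustrative tier; the threshold and labels are assumptions."""
    score = 0
    if asset.retention_years >= long_life_years:
        score += 1
    if asset.regulated:
        score += 1
    if asset.core_ip:
        score += 1
    if score >= 2:
        return "tier-1"  # move first
    if score == 1:
        return "tier-2"
    return "tier-3"      # can likely age out safely


# Hypothetical assets for demonstration.
assets = [
    DataAsset("campaign banners", retention_years=1, regulated=False, core_ip=False),
    DataAsset("customer records", retention_years=15, regulated=True, core_ip=False),
    DataAsset("product designs", retention_years=5, regulated=False, core_ip=True),
]

# Sort so the highest-priority tier comes first.
prioritised = sorted(assets, key=risk_tier)
tiers = {asset.name: risk_tier(asset) for asset in assets}
```

A model this small is easy to argue about in a planning meeting, which is the point: the tiers should be debated and adjusted, not treated as fixed.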

Run small pilots before anything enterprise-wide

Trying to change cryptography across the estate in one move is a recipe for disruption. Contained pilots let teams test how systems behave when encryption changes underneath them. This is where operational surprises show up early, while the blast radius is still small.

Bring vendors into the conversation early

A lot of cryptography sits in platforms you don’t fully control. Engaging vendors on their post-quantum roadmaps avoids last-minute dependency issues later. It also helps you separate what you can plan internally from what you’ll need external timelines for.

Treat this as a roadmap, not a one-off project

Post-quantum readiness works best when it’s woven into broader security and platform modernisation efforts. When teams align around ownership, timelines, and priorities, change becomes incremental instead of reactive. Over the next 12 months, your goal is not migration. It’s building the foundations that make future change boring, predictable, and low-risk.

Conclusion

Post-quantum preparation is less about picking the “right” algorithm than about how your company handles long-term risk. CIOs who start early aren’t trying to predict the future. They’re making it less chaotic. Building visibility, alignment, and crypto-agility today will stop this from turning into a costly, rushed scramble later.

If you’re trying to figure out how to turn technology shifts like post-quantum cryptography into practical plans, Zamun can help you cut through the noise and build a plan that works for your leadership team.

In practical terms, quantum-resistant encryption helps ensure that information you protect today doesn’t become readable years down the line.

Preparation works best when it starts early. You don’t need to migrate everything now, but building visibility into where cryptography sits across your systems helps avoid rushed decisions later.

“Harvest-now, decrypt-later” refers to the idea that encrypted data can be collected today and decrypted in the future as cryptographic standards weaken.
